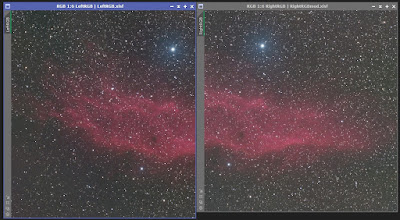

Here's the image at quarter-scale:

1/4 Scale Image

Full-Scale image at AstroBin.

Where to even start with this? How about the data?

Originally there were 13.2 hours of data, but I came across a video in which someone explained how they use PixInsight's SubframeSelector (SFS) process to cull bad frames. My approach to data culling has always been to keep every frame that isn't terribly bad, but for this project I thought I'd get tough. Using SFS led me to reject 3.6 hours of data, leaving 9.6 hours! To be fair, about a third of the rejections came from my penchant for starting data collection before the end of twilight. Very few frames looked bad in Blink, so I'm going to call this approach "2 sigma" aggressive: it basically culls any frame whose FWHM, eccentricity, or median value is more than two standard deviations above the mean. Note that the rejected frames might be perfectly fine in and of themselves, but relative to their cohort they are of significantly lesser quality. Frames with anomalously low star counts are also culled. An example is the session in which a smoke layer moved in and began obscuring stars in the late morning: star count fell markedly, and I removed the affected frames.
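For anyone who wants to see the "2 sigma" rule spelled out, here is a minimal Python sketch of the idea. It is not what SubframeSelector runs internally. The frame records, the field names (`fwhm`, `eccentricity`, `median`, `stars`), and the sample numbers are all made up for illustration, and using two standard deviations below the mean as the "anomalously low" star-count cutoff is my own assumption.

```python
from statistics import mean, stdev

def cull_frames(frames, n_sigma=2.0):
    """Split frames into (kept, rejected) using a simple n-sigma rule."""
    # Metrics where a HIGH value means a worse frame.
    high_is_bad = ("fwhm", "eccentricity", "median")
    limits = {}
    for key in high_is_bad:
        values = [f[key] for f in frames]
        limits[key] = mean(values) + n_sigma * stdev(values)

    # Star count is the opposite: a LOW value means a worse frame.
    # The n-sigma floor is an assumption, not a documented SFS rule.
    stars = [f["stars"] for f in frames]
    star_floor = mean(stars) - n_sigma * stdev(stars)

    kept, rejected = [], []
    for f in frames:
        too_high = any(f[key] > limits[key] for key in high_is_bad)
        too_few_stars = f["stars"] < star_floor
        (rejected if too_high or too_few_stars else kept).append(f)
    return kept, rejected

# Hypothetical session: frame f3 is bloated, frame f7 was shot through smoke.
frames = [
    {
        "name": f"f{i}",
        "fwhm": 3.0 if i % 2 == 0 else 3.1,
        "eccentricity": 0.44 if i % 2 == 0 else 0.46,
        "median": 0.010 if i % 2 == 0 else 0.011,
        "stars": 1000 if i % 2 == 0 else 1020,
    }
    for i in range(10)
]
frames[3]["fwhm"] = 5.0
frames[7]["stars"] = 400

kept, rejected = cull_frames(frames)
print([f["name"] for f in rejected])
```

Because the thresholds come from the cohort's own mean and standard deviation, a frame is judged only relative to its neighbors, which is why otherwise decent frames can still get the axe.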

Worth mentioning was the need to use WBPP's Grouping Keywords to make sure that light frames and their appropriate flats were processed together. This was the first time I used it, and it worked perfectly.

Also, I no longer use dark flats, or "flat darks," if you prefer. Only dark, flat, and bias frames are used for calibration. (Flat and dark frames are now taken for granted at AstroBin, it seems; it no longer asks if you use them.)

Now about the calibration frames, specifically the flats. It seems that most of the time my flat illumination was asymmetric for reasons I don't understand, and this gave the background modeling some problems. That big bright Polaris didn't help, either, nor did the fact that most of the image was nebulosity. My first pass used GradientCorrection, and that left the right side with a green cast. After playing with that for a while I moved on to DynamicBackgroundExtraction. That didn't clear it up, either. After some more thought I reverted to AutomaticBackgroundExtractor (ABE) with a 5th-order function, and that did the job.

Next, those darn satellites. The first processing pass got most of them, but a few stuck around in weakened form. They should have been removed during light frame integration, so I looked at what WBPP was using for rejection and it was Generalized Extreme Studentized Deviate (ESD). Some hunting around took me to a PixInsight forum where it was noted that ESD (using its default settings) wasn't doing a great job with satellites. So I told WBPP to instead use Linear Fit Clipping and that seemed to work better. Not perfect, just better. I will need to find out what ESD settings work best since overall it's probably the scheme to use. It may be that satellites and an image full of nebulosity are always going to be a problem.
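To show the general idea behind pixel rejection, here is a small Python sketch. To be clear, this is neither Linear Fit Clipping nor ESD, and it is not how PixInsight implements anything. It is a plain median/MAD sigma clip applied to one pixel's values across a stack, with made-up numbers, just to illustrate how a satellite trail that lands in a single frame can be thrown out before averaging.

```python
from statistics import median

def sigma_clip_mean(stack, n_sigma=3.0, max_iter=5):
    """Average one pixel across frames after rejecting outliers.

    Returns (clipped_mean, number_of_rejected_values).
    """
    values = list(stack)
    for _ in range(max_iter):
        center = median(values)
        # Median absolute deviation, scaled to approximate a standard
        # deviation for normally distributed noise.
        mad = median([abs(v - center) for v in values])
        sigma = 1.4826 * mad
        if sigma == 0:
            break
        kept = [v for v in values if abs(v - center) <= n_sigma * sigma]
        if len(kept) == len(values):
            break  # nothing more to reject
        values = kept
    return sum(values) / len(values), len(stack) - len(values)

# Hypothetical pixel: eight frames, one of them crossed by a satellite.
pixel_stack = [0.100, 0.102, 0.098, 0.101, 0.099, 0.100, 0.450, 0.101]

plain_mean = sum(pixel_stack) / len(pixel_stack)
clipped_mean, n_rejected = sigma_clip_mean(pixel_stack)
print(f"plain mean   {plain_mean:.4f}")
print(f"clipped mean {clipped_mean:.4f} ({n_rejected} value rejected)")
```

A faint trail is harder than this toy case: if its brightness sits only slightly above the noise, it can slip under the rejection threshold and survive in weakened form, which matches what I saw.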

I also learned that my usual haphazard application of the XTerminator family has been wrong. It's a processing sin to use NoiseXT (NXT) before BlurXT (BXT) or before SPCC. For this image I only applied NXT after taking the image nonlinear.

Here's my workflow for this project with the ">" symbol meaning "creates":

WBPP > Cropped channel masters

ABE (color channels) > Backgrounded color channel masters

ChannelCombination > RGB master

ImageSolver > RGB master with astrometry

SPCC > color-calibrated RGB master

ABE (luminance) > Backgrounded luminance master

BXT (luminance master and RGB master) > enhanced masters

STF and HT > nonlinear masters

NXT > de-noised masters

CurvesTransformation (with gentle "S" curve) > enhanced masters

LRGBCombination > LRGB master

assorted tweaks (saturation, sharpness, contrast, etc.) > Finished image

Not shown is an additional DynamicCrop after the ABE of luminance because ABE was a little overaggressive at the left edge. Even with two crops, the final image lost only 4.2% off the short axis and 5.5% off the long axis, for roughly a 10% areal loss. The reproducibility of the image framing was impressive. Thank you, NINA.
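The areal figure follows from the two axis losses, since the surviving area is the product of the surviving fractions of each axis. A quick Python check, assuming the percentages are fractions of each full axis length (the function name is just for illustration):

```python
def areal_loss(short_axis_loss, long_axis_loss):
    """Fraction of image area lost when each axis is trimmed by the given fraction."""
    return 1.0 - (1.0 - short_axis_loss) * (1.0 - long_axis_loss)

loss = areal_loss(0.042, 0.055)
print(f"{loss:.1%}")
```

This works out to a bit under 9.5%, which I'm rounding to 10%.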

Another lesson learned was that the XTerminators could be sped up quite a bit. Normally the XT installers put the necessary files in place, but on my old computer the install did not engage the GPU. My graphics card is an NVIDIA GeForce GTX 1050 Ti, circa 2018. This post explains how to set up a computer to use the GPU for faster XT performance. In my case it sped up the XTs by a factor of 4. I may need to repeat this every time an XT is updated.

So how did the processing work out? Mostly I was concerned that the area around Polaris was darkened by background extraction and didn't represent reality. I searched AstroBin for an image I could use as a sort of "ground truth" for what I had done. I found just what I wanted in an image by captured_mom8nts (which I'm guessing is not their real name). It appears to have been taken at a much shorter focal length and so should have suffered much less Polaris bloom, keeping the area around the star reasonably pristine. A little crop/rotate/scale/stretch and it matched my image's scale and orientation:

Comparison: Mine (top), captured_mom8nts (bottom)

I think it's fairly obvious that the dark areas on either side of Polaris in my image match those in captured_mom8nts's image, even though mine is much deeper. I'm happy!

I'm also happy with the star color. Shooting only 90 s exposures may have been the key, since it kept the stars from saturating. Next time I'll be shooting at f/2, but with a smaller objective, so I may keep the exposure time as is.

-----------------------------------------

All the components of my new Samyang 135 mm f/2 imaging system have arrived or are on their way. Next time I'll have a picture of it all assembled and possibly already taken on its first test drive!